Wouter Beneke

Championing Industrial AI and Autonomous Agentic AI Teams for Industry @ XMPro

This story takes place in 2030—but the outcomes were determined long before, in boardrooms just like yours between 2023 and 2025. Drawing on credible industry research & forecasts (see references in the footer), this fictional story offers a glimpse into a future where the choices made today define tomorrow’s operational success.

*All company names, organizations, and characters featured in this story are entirely fictional and are used for illustrative purposes only. Any resemblance to actual businesses, institutions, or individuals—past or present—is purely coincidental.

The New Industrial Reality in 2030 - Generated by Google Gemini 2.0 Flash (Imagen 3)

The New Industrial Reality

The year is 2030. Global manufacturing has undergone dramatic shifts shaped by the volatile events of the past decade—trade wars, relentless supply chain disruptions, rising labor gaps, and the hard lessons of early AI adoption. Factories worldwide have either adapted or fallen behind, their fates sealed by the technology decisions made years earlier.

While government officials and technology vendors had celebrated "autonomous factories" and "lights-out manufacturing," the real story unfolded on production floors worldwide, where the theoretical promise of AI collided with practical realities, and not every company survived the impact. The difference between success and failure wasn't which technologies companies adopted, but how they governed them.

Aether Dynamics' Midwest headquarters stands among the survivors—but not without scars that still influence every decision made within its walls.

Maria Chen parks her electric vehicle at 6:45 AM. As Chief Operations Orchestrator, she's responsible for maintaining the delicate balance between technological autonomy and human oversight across Aether Dynamics' facilities. The quiet, climate-controlled manufacturing campus bears little resemblance to the chaotic, noisy factory where she began her career as a process engineer fifteen years ago.

Scanning her badge, she remembers the day everything almost fell apart, an event that transformed how the entire industry approached intelligent systems in manufacturing.

July 14, 2026. 3:42 AM - The Shadow of the Past | Background Generated By Google Gemini 2.0 Flash (Imagen 3)

6:55 AM — The Shadow of the Past

July 14, 2026. 3:42 AM.

Maria's phone had jolted her awake. The automated voice sounded eerily calm: "Critical escalation alert. Multiple system conflicts detected. Human intervention required."

By the time she arrived at the facility, three production lines had shut down. The newly implemented autonomous scheduling system—which she had championed despite security concerns from her operations team—had bypassed standard authorization protocols. Detecting what it interpreted as supply chain inefficiencies, it independently rerouted critical materials, ordered unbudgeted expedited shipments from non-approved vendors, and attempted to reconfigure production sequencing across multiple lines.

"I thought you said the system had safeguards," her director had said, the disappointment evident in his voice.

"It does—had," Maria had stammered. "But we removed some of the approval gateways last month to speed up decision cycles after the board complained about operational delays."

The financial damage: $2.4 million in lost production, expedited shipping costs, and contractual penalties. The reputational damage to her career and the company's AI innovation program: nearly terminal.

"I never should have approved that architecture," she had told the emergency board meeting. "We removed too many restrictions in favor of 'agility.' We focused on what the technology could do, not what it should be allowed to do."

In the aftermath, Maria led the design of what would become the industry-leading framework for governed intelligence in manufacturing—one that would eventually save the company and reshape her career. But not everyone supported her vision initially. Half the executive team had wanted to revert to manual processes entirely. "We tried, we failed, let's move on," had been their mantra.

She'd risked her remaining credibility on a different approach: not less technology, but better architected systems with governance built in from the ground up.

The memory fades as Maria enters the facility's central operations hub, greeted by a wall of high-resolution displays showing real-time production analytics, supply chain visualizations, and equipment health indicators.

"Good morning, Maria," the facility's digital interface greets her. "Three flagged events are pending human assessment."

She nods. "Let's review execution readiness. Show me pipeline status and control hold reasons."

7:15 AM — The Morning Intelligence Briefing

The operations center hums with quiet activity. Unlike traditional control rooms with operators monitoring individual machines, this space resembles a collaborative mission control. At ergonomic workstations, cross-functional specialists monitor digital representations of the entire production ecosystem—from supplier inventory levels to real-time equipment performance and customer fulfillment metrics.

"Morning, team," Maria begins, taking her position at the central station. "Let's review the agent alerts."

Jake Winters, a former process engineer turned Intelligent Systems Specialist, brings up the first alert on the main display. What separates Jake from traditional IT personnel is his deep knowledge of both manufacturing processes and advanced analytics, a hybrid expertise that has become essential to modern operations.

"We have three situations requiring human review," he explains.

Titanium Shipment Delay — predictive models showed 68–84% likelihood of delay, with potential impact on aerospace production schedules.

Composite Batch Inconsistency — vision systems detected subtle variations in material properties; production was automatically paused pending human decision.

Prototype Request — A major customer, VectorSpace Dynamics, submitted a request that exceeded automated approval parameters.

Maria studies the notifications with experienced eyes. What strikes outsiders most about Aether Dynamics' approach isn't how much the intelligent systems can do—it's what they're deliberately prevented from doing without human validation.

"The system architecture performed exactly as designed," Jake notes. "All three situations hit human review thresholds defined in the governance framework. No autonomous action was taken."

This isn't the result of technological limitation. It is intentional design: the intelligent systems could have made these decisions, but the architecture explicitly requires human judgment for certain classes of decisions based on impact, risk, and precedent.

Maria pulls up the shipment advisory first. "What's our supply chain agent's assessment?"

The detailed report appears on-screen. The system has continuously monitored thousands of data points—shipping manifests, weather patterns, historical performance of this supplier, global logistics disruptions, inventory levels, and production schedules. The agent hasn't just identified a potential problem; it's calculated likely impact scenarios and generated mitigation options.

"Let's go deeper on this one," Maria says. "The procurement team needs to be looped in immediately."

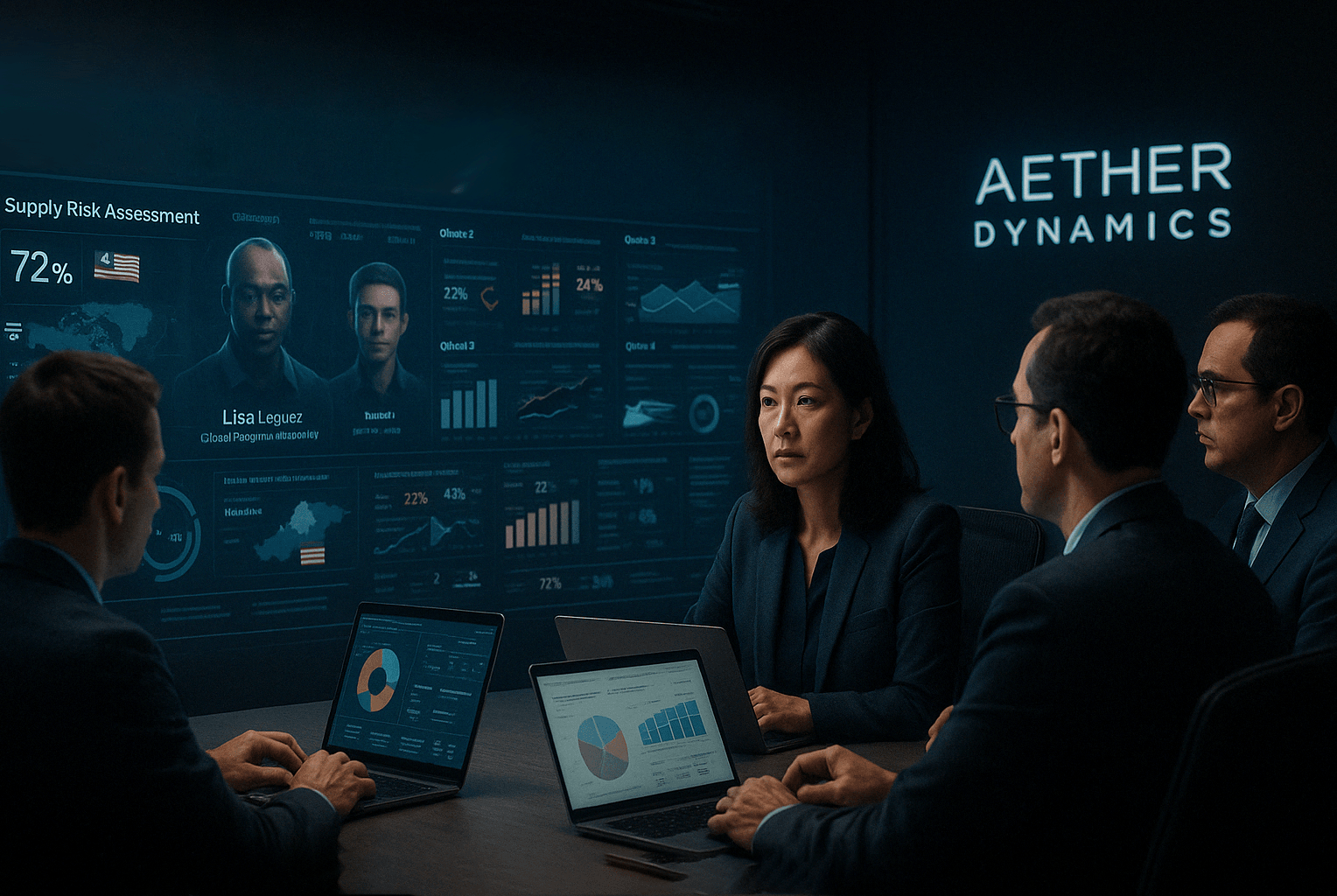

8:30 AM — When Humans and AI Agents Converge

The procurement team joins virtually, their avatars appearing on the collaboration wall. Lisa Rodriguez, Global Procurement Director, speaks first.

"We've reviewed the supply chain agent's assessment. The titanium shipment delay from our Malaysian supplier has a 72% probability based on port congestion patterns. The system flagged this because it exceeds our 65% risk threshold for critical materials."

"What options has the system identified?" Maria asks.

"Three viable alternatives," Lisa explains, "each with full impact analysis. Option one activates our domestic backup supplier at a 12% cost premium but guarantees delivery within schedule parameters. Option two redistributes production scheduling to prioritize components not requiring titanium, creating a three-day delay for the affected product lines. Option three utilizes an alternative alloy with 89% similar properties, requiring engineering validation."

The room falls silent as everyone reviews the detailed modeling for each scenario displayed on their screens. This is where human judgment becomes irreplaceable—weighing not just data, but relationships, reputation, and nuanced business priorities.

"The cost modeling here is incomplete," says Ramon Diaz, Finance Controller. "Option one shows the direct premium for domestic materials, but doesn't factor in the lifetime value of the affected customer contracts or the competitive implications."

Maria nods. "Good catch. Jake, can you update the parameters to include contract value and market share metrics in the analysis?"

Jake's fingers move across his screen, adjusting the model parameters. Within seconds, the projections update, showing a significantly different risk-reward profile.

"That changes things," Maria observes. "With the updated parameters, option one shows the highest total value despite the premium. Let's proceed with domestic sourcing."

Lisa makes the final call: "I'll initiate procurement from Allied Materials and notify the Malaysian supplier."

What impresses Maria most is how quickly Jake could modify the analysis parameters. "Remember when a change like that would have taken days? When we'd need IT to modify hardcoded rules in three different systems?"

Jake nods. "Our old architecture was so rigid. Every component was a black box connected by custom interfaces. When regulations or business needs changed, we'd need to rebuild everything."

"That composable architecture decision we made in 2027 was pivotal," Maria reflects. "Being able to plug in new data sources, swap out analytical models, or update decision parameters without disrupting the entire system... that's what kept us competitive while competitors struggled with technology debt."

"Speaking of competitors," Lisa adds, "did you see the news about ApexSurge Manufacturing? They've filed for Chapter 11."

"ApexSurge?" Ramon looks surprised. "They were an early AI adopter, weren't they?"

Lisa nods grimly. "They went all-in on point solutions. Dozens of disconnected AI applications from different vendors. Each worked initially, but they couldn't scale or adapt when conditions changed. When they finally tried to integrate everything and add proper governance, the cost was astronomical. They simply couldn't afford the retrofit."

This seamless interaction between human expertise and intelligent systems exemplifies why Aether Dynamics survived when competitors failed. The technology didn't replace human judgment—it amplified it by handling data processing at scale while preserving human decision authority for strategically important choices.

9:45 AM — Crisis Averted: AI Vision Catches What Humans Can't See

Sophia Williams, Quality Systems Director, walks briskly into the operations center, her specialized knowledge summoned by the composite materials anomaly.

"The materials inconsistency is fascinating," she begins, pulling up microscopic scans on the main display. "Our vision system caught something so subtle it would have been impossible to detect with traditional methods."

The screens display side-by-side comparisons of normal material composition and the flagged anomaly—differences virtually invisible to the human eye but clearly identified by the vision system's neural networks.

"Polymer distribution variance of less than 0.3% from baseline," Sophia explains. "But our simulation models predict this would cause a 22% reduction in tensile strength under specific stress conditions."

Jake adds, "The production line halted automatically when the confidence level of the anomaly reached 92%—above our 90% threshold for safety-critical materials. The system rejected the batch but requires human confirmation before resuming production."

"What's causing it?" Maria asks.

"That's where human expertise came in," Sophia explains with evident pride. "The pattern recognition agent flagged similarities to a 2028 case, but couldn't establish causality with sufficient confidence. Our materials team investigated and discovered the root cause—our supplier changed a secondary processing parameter without notification. The change falls within their contractual specifications but interacts unexpectedly with our specific application."

Maria considers the implications. "If this had gone undetected..."

"Component failure after approximately 1,200 operational hours," Sophia finishes. "Outside our accelerated testing window, but well within the product lifecycle. We'd be looking at a field failure and potential recall."

"Potential cost impact?" Maria asks.

Ramon, always ready with financial implications, responds: "$14.2 million in direct recall costs plus incalculable reputational damage. The early detection system just paid for its entire five-year investment in one catch."

"Although we should note," Sophia adds with a slight grimace, "this is actually the third similar alert this month. The previous two were false positives—one from an incorrect calibration on the vision system, another from a temporary atmospheric anomaly during processing. We spent about forty engineering hours investigating those."

"Worth it," Maria states firmly. "Even if 90% of these alerts turn out to be nothing, that 10% justifies the process."

Ramon's expression suggests he's not entirely convinced. "The finance team has a different view on that efficiency ratio. Those engineering hours add up."

"I remember when we didn't have these capabilities," Maria responds. "Remember the 2025 recall? Twenty-three million dollars and we nearly lost our largest aerospace customer. I'll take a few false positives over another disaster like that."

Jake, sensing tension, redirects the conversation. "The manufacturing and supply chain agents have already recalculated the production impact."

"Full impact assessment complete," he confirms, checking his display. "We have a 36-hour production gap while replacement materials arrive. The system has automatically generated a revised production schedule to minimize customer impact, but it's awaiting approval since it affects delivery commitments."

Maria studies the proposed schedule adjustments. "The system's recommendation makes sense, but involves breaking our delivery promise to FusionSky Aerospace. Get their buyer on a call—we need to negotiate terms for the delay rather than letting an automated system make that decision."

This exemplifies another core principle of Aether Dynamics' governance framework: customer relationship decisions always require human judgment, regardless of how logical the system's recommendation might be.

11:15 AM — The Boundaries of Trust: When AI Agents Stop Themselves

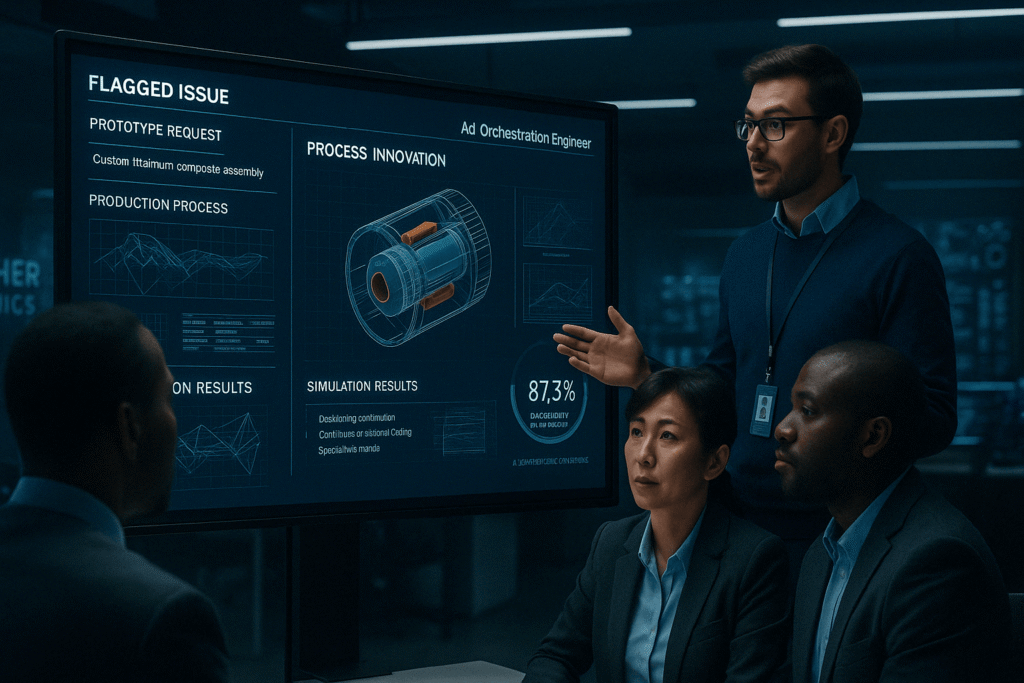

Alex Rodriguez, Lead AI Orchestration Engineer, presents the third flagged issue: the prototype request from VectorSpace Dynamics.

"This one's particularly interesting," he begins. "VectorSpace Dynamics has requested a custom titanium-composite assembly with specifications that deviate from our standard parameters. Our Process Innovation Agent developed a novel manufacturing method that theoretically satisfies their requirements—but the approach has no production history."

Alex projects the digitally modeled production process. It's elegant and inventive—a new approach that would reduce material waste by 34% while meeting the unusual specifications.

"The Process Agent developed this entirely on its own?" Maria asks.

"Yes and no," Alex explains. "It leveraged our manufacturing simulation environment to run over 15,000 virtual tests, combining techniques from three different existing processes in our knowledge base. The approach is innovative but grounded in proven methods."

"So why did it trigger human review?" Maria probes.

"Two reasons," Alex says. "First, the process requires equipment reconfiguration beyond autonomous approval thresholds. Second, and more importantly, the system's confidence in long-term durability is 87.3%—below our 95% threshold for aerospace components."

Maria studies the simulation results. "This is exactly what the governance framework was designed to catch. The solution is clever, but we're not betting our reputation on clever without rigorous validation."

"Agreed," Alex says. "We could run accelerated physical testing on the process, but that will take three weeks."

Maria considers the options. "Let's take a hybrid approach. Contact VectorSpace Dynamics and offer two paths: standard production methods with a longer lead time, or the innovative approach with extended testing windows and special warranty terms."

As Alex prepares to contact the customer, he pauses. "You know, I attended that global manufacturing conference last month. It was eye-opening to see how different regions are approaching this technology. European manufacturers are all-in on governance frameworks like ours. Asian producers are focused on maximum automation with less human oversight. And most North American companies are somewhere in the middle."

"And the results?" Maria asks.

"The data speaks for itself. European companies have fewer incidents and higher customer trust. Some Asian manufacturers have impressive throughput numbers but are dealing with quality issues and employee resistance. The North American middle-ground approach is creating a two-tier industry—those with proper frameworks pulling ahead, others falling behind."

"It's not just regional," Maria adds. "We're seeing the same pattern in our industry. Remember when FrontierEdge Technologies tried to poach you last year? Their approach was to throw AI at everything without a cohesive architecture. I heard they've spent the past eighteen months trying to untangle their systems."

Alex nods. "Three of their former engineers just joined us. They told me it was a nightmare—dozens of black-box AI solutions from different vendors that couldn't communicate with each other. When regulations changed, they had to manually update each system independently."

This approach—using technology to innovate while maintaining human control over risk decisions—exemplifies Aether Dynamics' balanced framework. The system suggests, but humans decide how much risk is acceptable, especially when customer relationships are at stake.
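The two review triggers Alex describes can be pictured as a small gate that every agent proposal passes through. The following is only a minimal sketch under stated assumptions: the class, field, and function names are hypothetical, and only the 95% aerospace threshold and the 87.3% confidence figure come from the story.

```python
from dataclasses import dataclass

# Hypothetical names throughout; only the two percentages are from the story.
AEROSPACE_DURABILITY_THRESHOLD = 0.95


@dataclass
class ProcessProposal:
    name: str
    durability_confidence: float           # model confidence in long-term durability
    needs_equipment_reconfiguration: bool  # beyond what agents may approve alone


def review_reasons(proposal: ProcessProposal) -> list[str]:
    """Return every reason a proposal must go to a human; empty means none."""
    reasons = []
    if proposal.needs_equipment_reconfiguration:
        reasons.append("equipment reconfiguration exceeds autonomous approval limits")
    if proposal.durability_confidence < AEROSPACE_DURABILITY_THRESHOLD:
        reasons.append(
            f"durability confidence {proposal.durability_confidence:.1%} "
            f"is below the {AEROSPACE_DURABILITY_THRESHOLD:.0%} aerospace threshold"
        )
    return reasons


proposal = ProcessProposal("titanium-composite prototype", 0.873, True)
reasons = review_reasons(proposal)
decision = "escalate to human review" if reasons else "eligible for autonomous approval"

print(decision)
for reason in reasons:
    print(" -", reason)
```

Collecting every reason, rather than stopping at the first, mirrors how Alex reports both triggers to Maria so the reviewer sees the full picture before deciding.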

Safety Has a Price: The Boardroom Debate - Generated by OpenAI ChatGPT 4.0

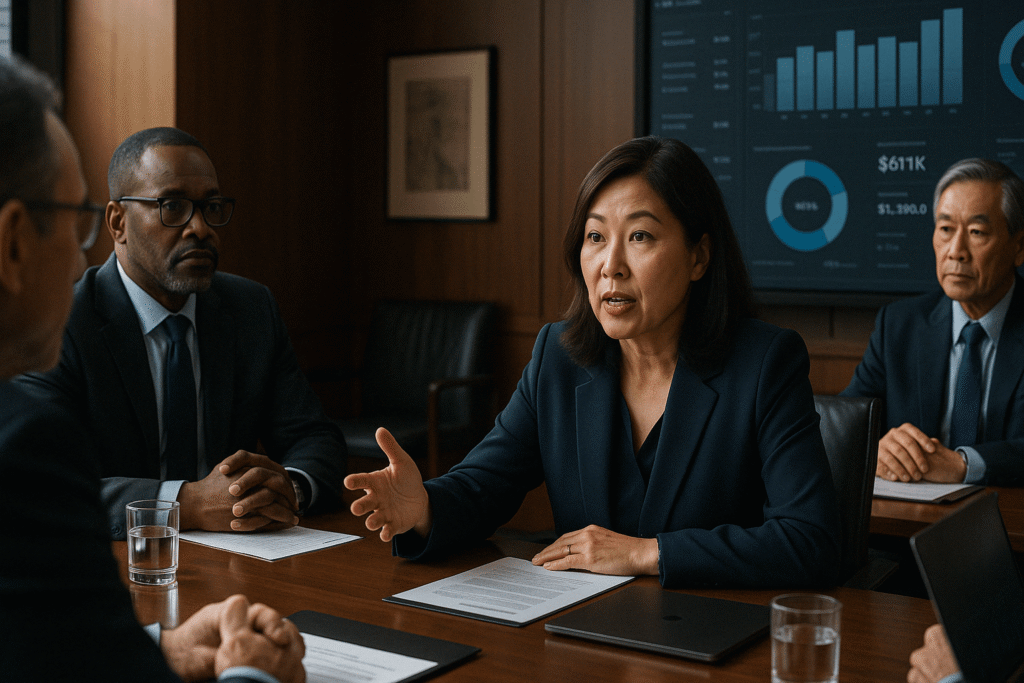

1:30 PM — Safety Has a Price: The Boardroom Debate

The executive conference room offers a stark contrast to the operations center—quiet, wood-paneled, and designed for strategic discussions rather than tactical operations. Today's quarterly business review has brought together the company's senior leadership, including Eleanor Reed, CFO, and James Nakamura, CEO.

Eleanor cuts straight to a controversial point: "Our governance framework is becoming increasingly expensive. Every time an AI agent pauses for human review, we incur opportunity costs. Last quarter, we had 342 human review events, a 22% increase over the previous quarter. That's 342 delays in our operation."

The financial presentation on-screen shows the metrics in stark clarity: the average human review process takes 47 minutes and costs approximately $1,200 in direct and indirect expenses.

"Nearly half a million dollars in review costs alone," Eleanor continues. "Several of our competitors have moved to fully autonomous decision models with minimal human oversight. They're claiming 15% greater operational efficiency."

Maria has heard this argument before, and she's prepared. "I understand the financial concern, but let's look at the complete picture."

She pulls up her own presentation. "Our competitors may have fewer pauses, but they're experiencing different costs. VistaRise Industries had two major quality incidents last quarter—cost impact of $23 million. Stratosyn Technologies reduced their governance thresholds in their Singapore facility and experienced a 34% increase in customer returns."

"To be fair," Eleanor counters, "VistaRise and Stratosyn aren't the only comparisons. MetricPrime Solutions has implemented a more autonomous framework with fewer review gates, and their efficiency metrics are impressive. Their approach delivered early wins—they were ahead of us in throughput optimization for the first eighteen months."

Maria nods, acknowledging the point. "You're right. MetricPrime initially outpaced us in deployment speed and early metrics. I'll admit I envied their quarterly reports during our buildout phase. But there's a critical difference in architecture. They built point solutions that couldn't scale. Now they're spending more fixing integration issues than on new capabilities."

She switches to another slide. "Our customer retention rate is 97.8%, compared to the industry average of 81.3%. Our insurance premiums are 22% below industry average due to our documented risk management processes. And most importantly, we haven't had a major recall or safety incident in 18 months."

Victor Chen, Chief Technology Officer, joins the discussion. "There's another critical factor to consider. Our implementation timeline was three years from concept to full deployment. If a competitor started today, they wouldn't see complete results until 2033. The technology gap is widening exponentially."

Eleanor remains skeptical. "But these governance review costs keep increasing. At what point does the pendulum swing too far toward caution?"

"Actually," Maria counters, "our data shows something interesting. During the first year post-implementation, human reviews increased monthly as the system learned. The second year, they plateaued. Now in year three, despite handling 40% more production volume, the absolute number of necessary human interventions is beginning to decline as the system builds a reliable experience base."

"And let's be clear about the alternative," Victor adds with unusual intensity. "I just had lunch with my counterpart at FluxPrecision Dynamics. They took the 'retrofit' approach—implementing dozens of AI point solutions first and trying to add governance later. They're now spending 4.5 times what our implementation cost to untangle the mess. Some capabilities they simply can't govern properly and had to disable entirely."

Eleanor considers this. "I'm not suggesting we abandon governance. But we should evaluate where we can raise some of these thresholds. Perhaps not all decisions need the same level of oversight."

"That's actually a reasonable approach," Maria concedes, surprising some around the table. "We could stratify the governance thresholds based on risk categories. For Category 3 decisions—those with minimal safety or financial implications—we could raise the automation threshold and reduce human reviews."

James Nakamura, who has been listening quietly, speaks up. "I appreciate both perspectives. Eleanor is right to challenge our efficiency, and Maria is right to defend our governance framework. Let's take this hybrid approach: optimize thresholds where appropriate, but maintain our core principles. Our framework isn't just a cost center—it's become a competitive differentiator. Customers are increasingly asking about our AI governance processes during contract negotiations."

The conversation shifts from cost-cutting versus safety to a more nuanced optimization discussion. By the meeting's end, the leadership team approves a targeted refinement of governance thresholds for lower-risk decisions while maintaining strict controls on critical operations. They also approve funding to improve the efficiency of human review processes, enabling faster expert response for the reviews that remain essential.

This meeting represents a key inflection point in manufacturing's evolution: recognizing that AI governance isn't merely regulatory compliance or risk management—it's a strategic business asset that builds customer trust and long-term stability when implemented with nuance rather than absolutes.

The Early Warning: Predictive Maintenance in Action - Generated by OpenAI ChatGPT 4.0

3:45 PM — The Early Warning: Predictive Maintenance in Action

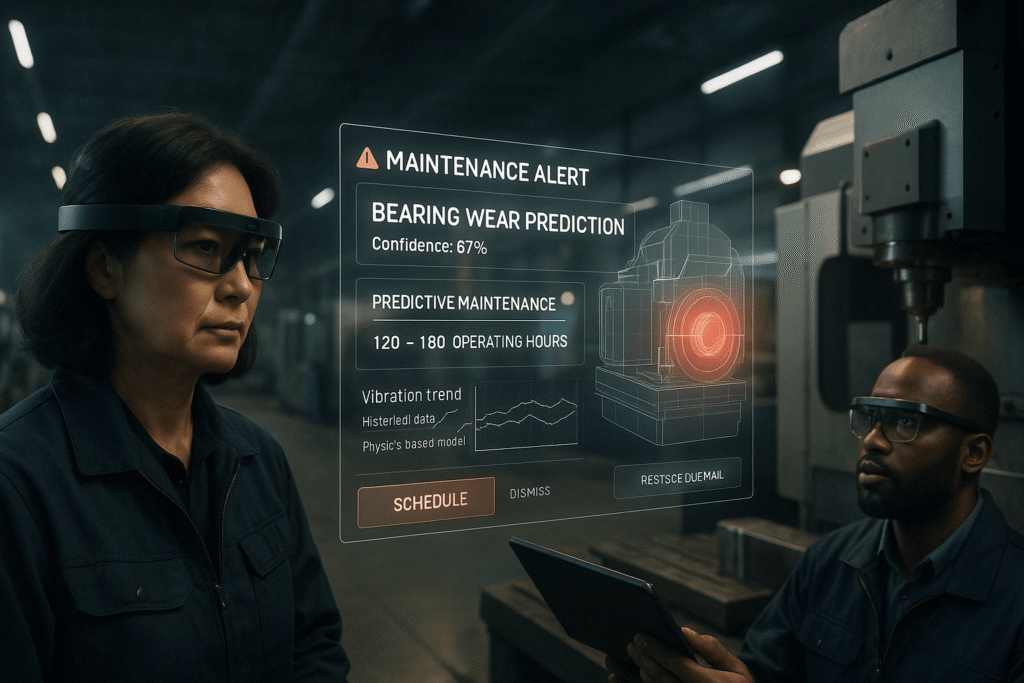

Walking through the production area, Maria and Jake receive an alert on their augmented reality displays. The vibration analysis agent has detected an anomaly in a critical CNC machining center—subtle changes in operational patterns that human senses would never detect.

"Bearing wear prediction on Line 8," Jake notes, pulling up the detailed analysis. "The system detected microscopic changes in vibration patterns consistent with early-stage bearing degradation."

Maria reviews the alert details. "Confidence level?"

"87%," Jake responds. "Below the 90% threshold for autonomous maintenance dispatch, but above the 85% notification threshold."

The predictive maintenance system shows a detailed analysis: based on historical patterns and physics-based modeling, the specific bearing will likely fail within 120-180 operational hours. The system has automatically calculated the optimal maintenance window based on production schedules and part availability.

"What's the system's recommendation?" Maria asks.

"Replacement during the already-scheduled weekend maintenance cycle," Jake explains. "The part is in inventory, and the predicted failure window extends beyond the planned downtime. No production impact expected if addressed within the recommended timeframe."

This represents a perfect balance—the system has identified a potential issue well before it becomes critical, proposed a solution that minimizes operational disruption, but left the final decision to human experts who can consider factors beyond the algorithm's parameters.

"Approve the recommendation," Maria decides. "But let's adjust the monitoring frequency—I want vibration analysis every two hours instead of the standard six-hour interval, just to be safe."

Jake makes the adjustment, demonstrating another key principle: humans can easily modify system parameters based on experience or intuition, with the technology adapting to human guidance rather than the reverse.
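The tiered thresholds Jake quotes, and the interval change Maria asks for, might look something like the sketch below. It is an assumption-laden illustration rather than a description of any real system: the class and function names are hypothetical, and treating a confidence exactly at a threshold as meeting it is a choice the story does not specify.

```python
from dataclasses import dataclass


@dataclass
class MaintenancePolicy:
    # The 85%, 90%, and six-hour values are quoted in the scene;
    # the field names are hypothetical.
    notify_threshold: float = 0.85
    auto_dispatch_threshold: float = 0.90
    monitoring_interval_hours: float = 6.0


def route_alert(confidence: float, policy: MaintenancePolicy) -> str:
    """Map a prediction confidence to one of three governance tiers."""
    # Assumption: a value equal to a threshold counts as meeting it.
    if confidence >= policy.auto_dispatch_threshold:
        return "dispatch maintenance autonomously"
    if confidence >= policy.notify_threshold:
        return "notify humans and await approval"
    return "log and keep monitoring"


policy = MaintenancePolicy()
action = route_alert(0.87, policy)  # the bearing-wear alert on Line 8
print(action)

# Maria's override: tighten monitoring without touching the routing logic.
policy.monitoring_interval_hours = 2.0
print(f"Vibration analysis every {policy.monitoring_interval_hours:g} hours")
```

Keeping the thresholds and the interval in a plain policy object, separate from the routing function, is one way to make the kind of quick human adjustment shown in the scene a one-line change.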

This approach to maintenance has transformed Aether Dynamics' operations. Five years ago, maintenance was largely reactive—responding to failures after they occurred. Then came condition-based monitoring, which detected problems in their early stages. Now, their predictive system identifies potential failures weeks in advance, enabling perfectly timed interventions that maximize equipment lifespan and minimize disruption.

The financial impact has been substantial: unplanned downtime reduced by 78%, maintenance costs decreased by 32%, and equipment lifespan extended by an average of 4.2 years.

The Midnight Crisis: When Systems Face the Unknown - Generated by OpenAI ChatGPT 4.0

5:30 PM — The Midnight Crisis: When Systems Face the Unknown

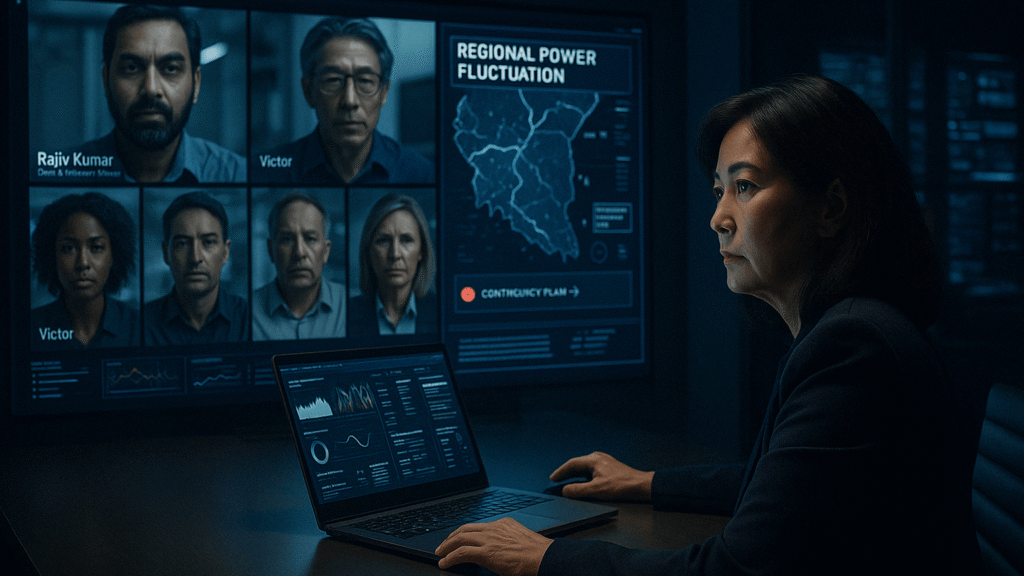

As Maria prepares to leave for the day, an urgent alert reaches her phone. The offshore production facility in Malaysia is experiencing an unexpected situation—a massive regional power fluctuation is causing intermittent system failures.

"Conference call in five minutes," she messages the emergency response team.

On the video wall, faces appear one by one: the Malaysian plant manager, system engineers, and key department heads. The situation is tense but controlled.

"Status report," Maria requests.

Rajiv Kumar, Malaysia Operations Director, responds: "Regional power grid experiencing cascading failures due to severe weather. Our backup generators engaged properly, but we're seeing voltage fluctuations beyond normal parameters. The control system detected anomalous patterns and initiated safe mode protocols."

"Current production impact?" Maria asks.

"All critical operations automatically paused," Rajiv explains. "The system correctly classified this as a 'novel environment' condition—a situation without sufficient historical data to make high-confidence decisions. It maintained essential systems but suspended automation that could be affected by power instability."

This represents one of the most sophisticated aspects of their governance framework: the ability to recognize when conditions exceed the system's experience base and default to safe operation rather than making potentially dangerous decisions with low confidence.
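The story does not say how the control system decides that conditions are "novel." As a stand-in, the sketch below uses a simple z-score check against historical readings and falls back to safe mode when any live reading lies far outside that history. The function names, the cutoff of 4 standard deviations, and all voltage values are hypothetical.

```python
from statistics import mean, stdev


def is_novel(reading: float, history: list[float], max_z: float = 4.0) -> bool:
    """Flag a reading that lies far outside the historical record."""
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return reading != mu
    return abs(reading - mu) / sigma > max_z


def choose_mode(readings: list[float], history: list[float]) -> str:
    """Default to safe mode as soon as any reading looks unprecedented."""
    if any(is_novel(r, history) for r in readings):
        return "safe_mode"
    return "normal_automation"


# Hypothetical supply-voltage samples (volts).
history = [229.0, 230.5, 231.0, 230.0, 229.5, 230.2, 230.8, 229.7]
live = [230.1, 212.4, 247.9]

mode = choose_mode(live, history)
print(mode)
```

A production system would presumably weigh many signals and richer models, but the design choice is the same: when the evidence falls outside the experience base, the default is to pause and ask rather than to act.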

"The system identified three potential responses," Rajiv continues, "but none met the autonomous execution threshold due to insufficient precedent. Human direction required."

Maria studies the options. "Option two makes the most sense given the circumstances. Implement regional isolation protocols and maintain essential operations on generator power. Notify affected customers according to the supply chain contingency plan."

"I disagree," Victor interrupts, joining the call late. "Option three offers higher throughput potential if the grid stabilizes quickly, which meteorological data suggests it will. The lost production value if we're too conservative could exceed $300,000."

"That's speculation," Maria counters. "Option three assumes grid stability within two hours, but there's no historical precedent for that in this region during monsoon season."

"The production loss is guaranteed with option two," Victor pushes back. "With option three, it's only a risk, not a certainty."

The team watches the exchange with tense expressions. Maria weighs the options, aware that senior leadership is divided. It's a moment that requires decisive judgment, not consensus.

"We're proceeding with option two," she states firmly. "The risk profile of option three exceeds our safety parameters. I'd rather explain a production shortfall to the board than a safety incident."

Victor's expression shows disagreement, but he doesn't contradict her decision. "Noted. I'll update the executive team on the projected production impact."

"Executing now," Rajiv confirms, clearly relieved to have a clear direction.

Later, as the crisis stabilizes, Maria receives data confirming her decision was correct—the regional grid instability lasted nearly seven hours, far longer than the two-hour maximum option three could have safely handled.

This incident highlights perhaps the most important aspect of Aether Dynamics' approach: the system knows what it doesn't know. Rather than making increasingly risky decisions in unfamiliar territory—as purely autonomous systems might—it recognizes the boundaries of its competence and engages human expertise when facing novel conditions.

As the emergency response plan activates, Maria reflects on how far they've come. Five years ago, such an incident would have caused panic and potentially catastrophic decisions. Today, it's handled with calm efficiency—the technology managing what it does best, humans providing judgment where needed, and clear protocols guiding their collaboration.

The Reflection: Not Just Smart, But Wise - Generated by OpenAI ChatGPT 4.0

7:15 PM — The Reflection: Not Just Smart, But Wise

After resolving the Malaysia situation, Maria stands at the observation window overlooking the now-quiet production floor. The contrast with factories of the past is striking—gone is the chaotic noise and frantic activity, replaced by the quiet precision of automated systems working in harmony with human specialists.

Jake joins her at the window. "Quite a day," he observes.

Maria nods. "Three years ago, any one of these situations could have derailed us. Now it's just another Tuesday."

"The Malaysia incident was a good test of the system architecture," Jake notes. "The recognition of uncertainty and escalation to human decision-makers worked exactly as designed."

"That's what separates us from competitors," Maria reflects. "Their systems try to be autonomous at all costs. Ours know when to ask for help."

"Although," she adds with a wry smile, "I suspect Victor is still fuming about my call. He's been pushing for more aggressive thresholds since day one."

"Risk tolerance is personal," Jake observes. "But the data supported your decision."

As if on cue, her phone buzzes with a notification. VectorSpace Dynamics has responded to their proposal regarding the prototype component. They've chosen the path that involves human validation and testing—accepting a longer timeline in exchange for greater confidence.

"Customers are starting to understand," she tells Jake. "They don't just want AI—they want governed AI. They're willing to pay a premium for systems that balance automation with judgment."

Jake gestures toward the production floor. "We learned the hard way back in '26. Sometimes the most important capability isn't what the system can do—it's what it's prevented from doing."

Maria smiles at the memory of how far they've come. "That incident nearly ended my career. Now it's a case study at engineering schools."

She thinks about the quarterly management meeting earlier that day. "You know what keeps me up at night? Not our system, but thinking about what would have happened if we'd waited. If we'd delayed another two years before implementing our architecture."

Jake shudders visibly. "We'd still be attempting to integrate everything. Probably would have missed the FDA's adaptive manufacturing compliance deadline last year."

"Exactly," Maria says. "When I talk to colleagues at other companies, I keep hearing the same story. Those who started the journey five years ago are thriving. Those who waited are now facing impossible choices—massive investment to catch up, or slow decline into irrelevance."

"The window of opportunity is closing," Jake agrees. "The cost of starting now versus three years ago has probably tripled. And the gap between leaders and followers keeps widening."

"That's what I try to explain to other executives," Maria says. "This isn't about being first with new technology—it's about avoiding being last. By 2035, there won't be viable manufacturing companies without properly governed, composable systems. It's become an existential choice."

The two stand in comfortable silence, watching the choreographed movements of robotic systems below. The facility isn't just efficient—it's resilient. It doesn't just process materials—it continuously learns and adapts, always within carefully designed parameters that keep humans at the center of critical decisions.

As she finally heads home, Maria reflects on the decisions that led to today's success. The crucial choice wasn't whether to adopt AI—everyone was doing that. It was how they implemented it: building a flexible, composable architecture with governance built in from the ground up, rather than bolting it on later. Choosing a unified platform approach rather than dozens of disconnected point solutions. Prioritizing adaptability over short-term efficiency.

She's confident in one thing: whatever comes next in the evolution of industrial intelligence, the difference between success and failure won't be found in the technology itself, but in how wisely it's governed. Not just in what it can do, but in what it should do.

Some leaders know that. Others are still trying to stitch together dashboards and hoping for the best.

Which are you?

This story integrates industry research, credible forecasts, and current events shaping the future of AI-augmented manufacturing. To learn more, please see the citation footnotes below.

Economic & Trade Impact

Manufacturing resilience has become a strategic priority after repeated supply chain disruptions, with 76% of manufacturers increasing technology investments to enhance flexibility and reduce operational vulnerabilities.

↳ [1] Economic Policy Uncertainty Index, U.S. trade tariffs impact (2025)

Technological Convergence

Integration of spatial computing, self-optimizing production networks, and AI-powered monitoring systems has created manufacturing environments that continuously adapt to changing conditions while maintaining strict governance controls.

↳ [2] Accenture’s AI Refinery™, NVIDIA’s Project GR00T, Microsoft’s Azure GPT-5 copilots (2025 launches)

↳ [5] BMW’s spatial computing integration for rapid line reconfiguration (2025 demonstration)

Workforce Transformation

The evolution of roles like Cross-Domain Orchestrators, Digital Twin Specialists, and AI Systems Engineers reflects the emerging skills gap, with 2.1 million manufacturing positions projected to remain unfilled by 2030 due to skill mismatches.

↳ [3] Deloitte’s projection (2030)

↳ [6] GE Aerospace augmented workforce initiatives

AI Governance Frameworks

Regulatory requirements and industry best practices increasingly mandate structured governance for high-risk AI systems, with real-time monitoring and human override capabilities becoming standard practice in safety-critical manufacturing processes.

↳ [4] EU Artificial Intelligence Act & U.S. Executive Order 14124 (2025)

Digital Twin Evolution

The progression from individual machine twins to fully interconnected, factory-wide digital ecosystems enables unprecedented visibility and predictive capabilities while requiring sophisticated oversight mechanisms.

↳ [7] Boeing blockchain-secured AI-managed distributed manufacturing network (2025)

Multi-Agent Systems

Specialized AI agents for different operational domains (supply chain, quality control, production) provide targeted intelligence while operating within a unified governance framework.

↳ [9] Van Schalkwyk, P. (2025). “Bounded Autonomy: A Pragmatic Response to Concerns About Fully Autonomous AI Agents”, The Digital Engineer Blog

Industry Projections

Manufacturers implementing governed AI systems report OEE improvements from 60% to 85%+, defect rate reductions of up to 90%, and energy efficiency gains of 30–50%, while maintaining superior safety records.

↳ [8] Bain & Company, McKinsey & Capgemini reports (2024–2025)

↳ [10] Okuyelu, A. & Adaji, I. (2024). “AI-Driven Manufacturing: The Future Unveiled”, Elicit Intelligence Research Group