Authored by Wouter Beneke, Marketing Lead at XMPro

The launch of Claude Cowork on January 12th has been widely celebrated.

Anthropic describes it as "Claude Code for the rest of your work." In practical terms, it means that anyone—not just developers—can now point an AI at a folder on their desktop, describe what they want done, and watch it execute. Organise files. Extract data from receipts into spreadsheets. Draft reports from scattered notes. Create, edit, delete. Autonomous execution.

The product was reportedly built in a week and a half, largely by Claude Code itself.

That alone should tell you something about where we are.

But the most important thing about Claude Cowork is not what it can do. It is what it reveals about what breaks next.

We are no longer in a world where building is constrained by who can code. We are in a world where execution is abundant, friction is collapsing, and the organisational structures designed to manage scarcity are about to be overwhelmed by surplus.

The question is no longer: Can we build faster?

The question is: Can we absorb what gets built?

Most enterprises are not ready for the answer.

The Real Shift: From Coding Scarcity to Execution Abundance

For two decades, enterprises were protected from certain classes of failure by a simple constraint: not everyone could build.

Coding skill was limited. Deployment required coordination. Architectural decisions were slow—sometimes painfully so. Even poorly designed systems took time to materialise because execution itself was hard.

Tools like Claude Cowork remove that friction.

It strips away the interface barriers that kept agentic tools mostly in developer workflows. The command line disappears. The environment is configured. The user describes outcomes. The agent plans and executes.

This is not AI assisting developers. This is code democratization in its most literal form.

But here is what most commentary misses: the definition of "code" has quietly expanded.

When a marketing analyst points Claude at a folder of screenshots and asks it to generate an expense spreadsheet, they are not "coding" in any traditional sense. They are not writing Python or configuring APIs. But they are creating executable logic—conditional behaviour, data transformations, decision rules—that operates on production data and produces real outputs.

They are programming without knowing it.

Enterprises used to scale systems. Now they are scaling logic. The governance model has not caught up.

When coding skill was scarce, damage was contained. Bad design choices took time to accumulate. Architectural drift happened slowly enough that it could sometimes be corrected.

Now, the friction is gone. Anyone can build. And anyone will.

What Actually Happens When Everyone Builds Their Own Solution

Let's move past hypotheticals.

When teams are empowered to build their own solutions quickly, a predictable pattern emerges—one that has played out in every enterprise that has lived through a technology democratization wave.

Here is what it looks like in practice:

Multiple teams identify the same underlying problem. Asset downtime. Operational risk. Planning inefficiencies. Quality deviations. Compliance reporting. Customer churn prediction.

Each team builds a solution that works for them. One team uses Claude to create a monitoring spreadsheet. Another builds an alerting workflow. A third generates a reporting template. A fourth automates a data extraction process.

At first, this looks like progress. Bottlenecks disappear. Teams move faster. Local KPIs improve. Innovation feels alive. Leadership sees velocity and celebrates it.

Then the cracks form.

Not immediately. Not obviously. But steadily.

A head of operations at a mining company described a familiar pattern. Five different teams were calculating "equipment availability" from the same underlying asset data. All five calculations were defensible. All five were different. When leadership asked a simple question—"What is our current fleet availability?"—they received five answers, a two-hour meeting, and no resolution.

Scale that pattern across an enterprise, and the issue is not that fifty teams built fifty tools.

The issue is that they also built fifty interpretations of reality.

Fifty definitions of "downtime." Fifty thresholds for what constitutes "risk." Fifty ways to classify an anomaly. Fifty alerting philosophies, each reasonable in isolation. Fifty sets of hardcoded assumptions about data frequency, data quality, operating conditions, and decision logic.

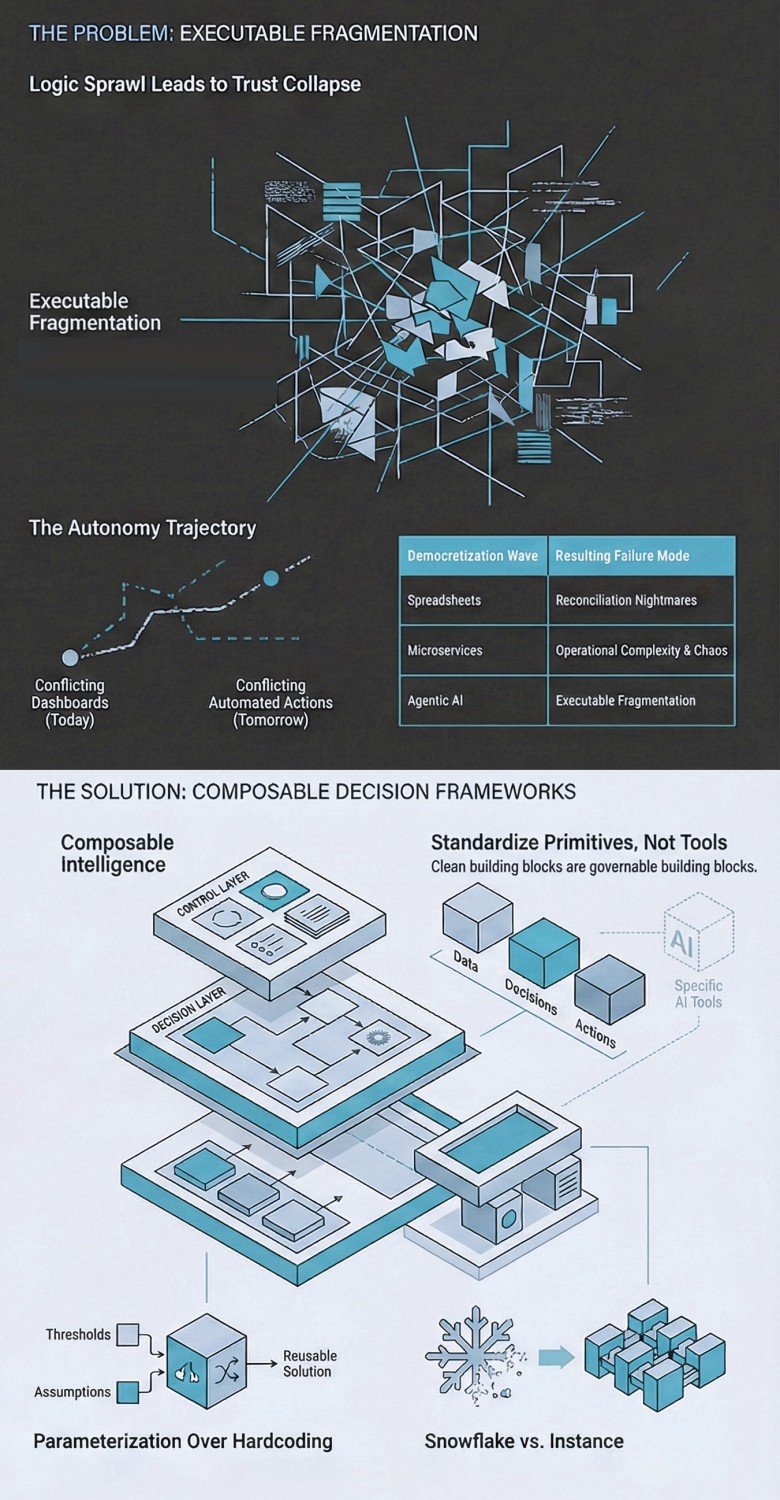

This is executable fragmentation: the proliferation of logic that works locally and conflicts globally.

None of this is malicious. Most of it is well-intentioned. Each solution genuinely works for the team that built it.

But over time, the organisation loses a shared operational language.

Dashboards don't disagree because the data is wrong.

They disagree because the semantics have drifted.
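To make the mining example concrete, here is a minimal, hypothetical sketch: one week of downtime events for a single haul truck, and two defensible definitions of "availability". The field names, the 168-hour window and both formulas are illustrative assumptions, not any specific site's method.

```python
from dataclasses import dataclass


@dataclass
class DowntimeEvent:
    hours: float
    planned: bool  # True for scheduled maintenance, False for breakdowns


# Hypothetical one-week log for a single truck (168 calendar hours).
CALENDAR_HOURS = 168.0
EVENTS = [
    DowntimeEvent(hours=12.0, planned=True),   # scheduled service
    DowntimeEvent(hours=6.0, planned=False),   # hydraulic failure
    DowntimeEvent(hours=3.0, planned=False),   # tyre change
]


def availability_maintenance_team(events, calendar_hours):
    """Counts all downtime, planned or not, against calendar time."""
    down = sum(e.hours for e in events)
    return (calendar_hours - down) / calendar_hours


def availability_operations_team(events, calendar_hours):
    """Removes planned maintenance from the denominator, then counts only breakdowns."""
    planned = sum(e.hours for e in events if e.planned)
    unplanned = sum(e.hours for e in events if not e.planned)
    scheduled_hours = calendar_hours - planned
    return (scheduled_hours - unplanned) / scheduled_hours


if __name__ == "__main__":
    a = availability_maintenance_team(EVENTS, CALENDAR_HOURS)
    b = availability_operations_team(EVENTS, CALENDAR_HOURS)
    print(f"maintenance view: {a:.1%}")
    print(f"operations view:  {b:.1%}")
```

Same data, same week: the first function reports 87.5%, the second about 94.2%. Neither is a bug. They encode different semantics, and a shared primitive would fix one definition (or name both explicitly) so every dashboard calls the same one.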

From Data Sprawl to Decision Trust Collapse

When executable fragmentation takes hold, leadership often focuses on the visible symptom: data sprawl.

Too many pipelines. Too many transformations. Too many tools. Too many copies of the same data living in different places.

That is real, but it is secondary.

The deeper issue is decision trust.

Consider what happens when an executive asks a seemingly simple question:

"Are we operating within acceptable risk right now?"

And receives three different answers depending on which system they consult.

Something breaks. Not technically. Organisationally.

At that moment, data-driven decision-making quietly degrades into:

- Manual overrides

- Cross-checking between systems

- Executive intuition

- Meetings to reconcile metrics instead of acting on them

The organisation now has more data than ever, and less confidence than before.

This is the hidden cost of uncoordinated building. It is not that bad software gets created. It is that trust erodes so gradually that no one can point to the moment it happened.

By the time leadership notices, the dysfunction is structural.

The progression is predictable: logic sprawl leads to trust collapse, and trust collapse sets the stage for action conflict.

Local Winners, Enterprise-Level Dysfunction

Here is the uncomfortable truth that most internal narratives obscure.

From inside each team, the story still looks positive.

Their solution works. Their metrics improved. Their automation delivered value. They shipped faster than the central IT team could have. They solved their own problem.

They are not wrong.

But enterprises do not fail because teams underperform. They fail because local optimisation produces global incoherence.

When decisions span multiple functions, multiple sites, or multiple time horizons, these locally rational systems begin to conflict with each other.

The asset management team's definition of "critical failure" does not match the maintenance team's definition. The operations dashboard shows green while the finance dashboard shows red. The compliance report contradicts the safety report because they were built from different source assumptions.

No one owns the whole. No one can see end-to-end behaviour. No one can explain why the organisation feels slower despite all the automation.

This is not a failure of technology. It is a failure of architecture.

And architecture, in this context, is not a diagram on a slide. It is the set of constraints that determine whether local decisions compound into organisational intelligence or fragment into organisational chaos.

Why This Always Ends Badly: The Historical Pattern

We have seen this movie before.

Spreadsheets empowered teams and destroyed reconciliation. Every finance department has lived through the nightmare of discovering that five different spreadsheets were calculating the same number five different ways, and no one knew which one was correct.

Early microservices increased agility and collapsed operability. The promise was independent deployment. The reality was distributed complexity, with debugging that required understanding dozens of services and their undocumented interactions.

Shadow IT sped delivery and weakened security. Teams bypassed central IT to move faster, and organisations discovered years later that critical business logic was running on unsanctioned cloud accounts with no backup, no audit trail, and no one who understood how it worked.

Dashboard proliferation improved visibility and produced decision overload. Every team built their own view of the truth, until executives spent more time reconciling dashboards than making decisions.

Each time, the story followed the same arc:

- A tool removes friction

- Adoption explodes

- Local optimisation dominates

- Structure lags

- The organisation pays the price

Code democratization is not different. It is faster.

What used to take years now happens in months. What used to require developers now requires only intent.

The Missing Layer: Composable Decision Frameworks

To be clear, this is not a critique of Claude Cowork. Tools like Cowork are doing exactly what they should: collapsing friction and maximising local productivity. In many cases, the gains are real, immediate, and necessary.

The risk does not come from what these tools enable individuals to do. It comes from what organisations fail to put in place around them.

What is absent from most conversations about tools like Claude Cowork is the system layer they operate within.

Not governance as a policy document. Not architecture as a slide deck. Not "AI safety" as a vague aspiration.

But a composable decision framework—a governed composition layer—that defines:

- Standard primitives for data, decisions, and actions

- Explicit separation between control and execution

- Parameterisation instead of hardcoded assumptions

- Bounded autonomy with clear scope limits

- Observability that makes behaviour visible

- Lifecycle management for logic, not just code

This layer does not exist to slow teams down. It exists to prevent speed from becoming entropy.

A composable decision framework isn't just about reuse. It's about decision accountability.

When an agent recommends or executes an action, you need to know: what objective it was optimising, what constraints bounded it, what evidence it used, and what would have caused it to choose differently. Without that, automation doesn't scale. It merely accelerates blame.
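One way to make those four questions answerable is to have every agent emit a structured decision record alongside its action. The sketch below is hypothetical: the `DecisionRecord` name and its fields simply mirror the four questions above, the example values are invented, and it assumes Python 3.9+.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical audit record an agent emits with every action."""
    action: str
    objective: str                   # what the agent was optimising
    constraints: dict[str, float]    # limits that bounded the choice
    evidence: dict[str, float]       # inputs the decision was based on
    would_change_if: list[str] = field(default_factory=list)  # conditions that would flip the choice

    def is_explainable(self) -> bool:
        """True only if all four accountability questions have an answer."""
        return all([self.objective, self.constraints, self.evidence, self.would_change_if])


# Invented example values, for illustration only.
record = DecisionRecord(
    action="reduce_conveyor_speed",
    objective="minimise bearing failure risk",
    constraints={"min_throughput_tph": 1800.0},
    evidence={"bearing_temp_c": 92.5, "vibration_mm_s": 7.1},
    would_change_if=["bearing_temp_c < 85", "vibration_mm_s < 4.5"],
)

print(json.dumps(asdict(record), indent=2))
```

A record with an empty `would_change_if` fails `is_explainable()`, which is the kind of check a framework could enforce before an action is allowed to run.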

The distinction matters.

A framework is not bureaucracy. It is the enabling constraint that makes safe speed possible.

Think of it this way: a highway system does not slow down traffic. It enables traffic to move faster than any collection of individual roads could. The rules—lanes, signals, on-ramps, speed limits—are what make coordinated movement possible.

A composable decision framework does the same thing for organisational building.

It does not tell teams what to build. It tells them how to build so that what they create can be absorbed, extended, and governed.

Why Composability Matters More Than Standardisation

Many organisations hear "framework" and think standardisation. They imagine locked-down tooling, approved vendor lists, and central committees that slow everything down.

That is the wrong mental model.

The goal is not to standardise outcomes. It is to standardise building blocks.

Composable systems allow teams to:

- Assemble solutions rapidly from existing primitives

- Reuse logic without rewriting it

- Adapt behaviour via parameters, not code forks

- Extend scope without redesigning from scratch

- Connect to other systems through shared interfaces

Without composability, every solution is a snowflake—unique, fragile, and expensive to maintain.

With composability, every solution is an instance—a configuration of shared components that can be understood, governed, and evolved.

That distinction determines whether intelligence compounds or fragments.

Parameterisation: The Quiet Superpower

Hardcoded logic is where scale goes to die.

Thresholds embedded in scripts. Assumptions baked into workflows. Context implied rather than explicit. Asset IDs written directly into automation logic. Business rules scattered across dozens of files with no single source of truth.

These choices feel efficient early on. They are catastrophic later.

When the business changes—a new asset class, a new regulatory requirement, a new operating context—every hardcoded solution must be individually modified. There is no leverage. There is no learning transfer. Each change is a bespoke project.

Parameterisation changes this equation.

One solution serves many contexts. One model adapts across assets or sites. Learning transfers instead of resets. Automation remains safe as conditions change because the conditions are inputs, not assumptions.
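A minimal sketch of the difference, with invented asset classes and threshold values: the first function bakes the asset and the limit into the logic; the second takes the operating context as an input, so one function serves every asset class that has a parameter set. `ThermalLimits` and `LIMITS_BY_ASSET_CLASS` are hypothetical names.

```python
from dataclasses import dataclass


# Hardcoded: the asset and the threshold are baked into the logic.
def is_overheating_hardcoded(asset_id: str, temp_c: float) -> bool:
    return asset_id == "PUMP-017" and temp_c >= 80.0


# Parameterised: the context is an input, not an assumption.
@dataclass(frozen=True)
class ThermalLimits:
    warn_c: float
    trip_c: float


# Hypothetical parameter sets, owned and versioned outside the logic.
LIMITS_BY_ASSET_CLASS = {
    "centrifugal_pump": ThermalLimits(warn_c=80.0, trip_c=95.0),
    "gearbox": ThermalLimits(warn_c=90.0, trip_c=110.0),
}


def thermal_status(temp_c: float, limits: ThermalLimits) -> str:
    """Classify a temperature reading against whichever limits are supplied."""
    if temp_c >= limits.trip_c:
        return "trip"
    if temp_c >= limits.warn_c:
        return "warn"
    return "ok"


if __name__ == "__main__":
    for asset_class, limits in LIMITS_BY_ASSET_CLASS.items():
        print(asset_class, thermal_status(85.0, limits))
```

At 85 °C the pump reports `warn` and the gearbox `ok`, from the same function. Supporting a new asset class means adding a parameter set, not editing or forking the logic; the hardcoded version would need a new copy for every asset.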

This is how organisations scale intelligence, not just execution.

And it is precisely what tools like Claude Cowork, used without structure, will not do. They will hardcode because hardcoding is locally optimal. They will embed assumptions because embedded assumptions solve the immediate problem faster.

They will create solutions that work beautifully today and become liabilities tomorrow.

The Key Insight: Claude Cowork Needs a Framework to Reach Full Power

Here is the core insight most commentary misses.

Tools like Claude Cowork are not dangerous because they are powerful.

They are dangerous when they are powerful without structure.

Claude Cowork, directed by individual users solving individual problems, will:

- Hardcode logic because it is locally optimal

- Optimise for task completion, not system coherence

- Encode assumptions directly into outputs

- Solve the immediate problem with no incentive to generalise

- Create solutions that cannot be reused, extended, or governed

This is not a flaw in the tool. It is exactly what the tool is designed to do. It maximises local productivity. It does not—and cannot—maximise organisational coherence.

Inside a composable decision framework, the equation changes entirely.

Claude Cowork becomes a composer, not a constructor. It assembles existing primitives. It configures parameterised components. It creates instances of governed patterns.

- It accelerates reuse instead of duplication

- It enforces consistency without central bottlenecks

- It enables safe automation within explicit, bounded scope

- It allows intelligence to scale in scope, not just speed

The hardest part is not generating actions. It's coordinating them.

In real operations, agents will optimise competing goals: safety, throughput, cost, maintenance risk, compliance. A composable decision framework provides the shared primitives and arbitration rules—objectives, constraints, and escalation paths—so that local actions don't fight each other in production.
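A deliberately simple, hypothetical sketch of arbitration rules: agents submit proposals tagged with the objective they serve, conflicts on the same target are resolved by a priority order, and ties escalate to a human. The priority ordering below is an illustrative assumption, not a recommendation; in a real framework it would itself be a governed, versioned parameter.

```python
from dataclasses import dataclass

# Hypothetical priority order, highest first. Unknown objectives raise
# ValueError in arbitrate(), so an unregistered goal cannot silently win.
OBJECTIVE_PRIORITY = ["safety", "compliance", "maintenance_risk", "throughput", "cost"]


@dataclass(frozen=True)
class Proposal:
    agent: str
    target: str      # the asset or process the action applies to
    action: str      # e.g. "decrease_output" or "increase_output"
    objective: str   # which objective the agent is optimising


def arbitrate(proposals: list[Proposal]) -> dict[str, str]:
    """Resolve proposals per target.

    Returns target -> chosen action, or "ESCALATE" when the conflicting
    proposals share the same top priority and no rule can decide.
    """
    by_target: dict[str, list[Proposal]] = {}
    for p in proposals:
        by_target.setdefault(p.target, []).append(p)

    decisions: dict[str, str] = {}
    for target, group in by_target.items():
        actions = {p.action for p in group}
        if len(actions) == 1:
            decisions[target] = actions.pop()  # agents agree: no conflict
            continue
        best_rank = min(OBJECTIVE_PRIORITY.index(p.objective) for p in group)
        winners = {p.action for p in group
                   if OBJECTIVE_PRIORITY.index(p.objective) == best_rank}
        decisions[target] = winners.pop() if len(winners) == 1 else "ESCALATE"
    return decisions


if __name__ == "__main__":
    proposals = [
        Proposal("risk_agent", "crusher_line_2", "decrease_output", "safety"),
        Proposal("yield_agent", "crusher_line_2", "increase_output", "throughput"),
        Proposal("cost_agent", "kiln_1", "decrease_output", "cost"),
        Proposal("planning_agent", "kiln_1", "increase_output", "cost"),
    ]
    print(arbitrate(proposals))
    # {'crusher_line_2': 'decrease_output', 'kiln_1': 'ESCALATE'}
```

The point is not the specific ordering but that the rule is explicit: two agents following their local logic correctly no longer act against each other unseen, because the conflict is resolved, or escalated, in one visible place.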

Speed alone does not create advantage.

Speed with structure does.

The relationship is multiplicative, not additive. A composable decision framework does not slow down tools like Claude Cowork. It unlocks their full power by ensuring that what they build can be absorbed, governed, and scaled.

The Autonomy Trajectory: Why This Gets Worse Before It Gets Better

There is one more dimension to this problem that most discussions ignore.

Today, executable fragmentation shows up as conflicting dashboards and incompatible reports. That is painful, but it is visible. Humans are still in the loop. The dysfunction surfaces in meetings where people argue about whose numbers are right.

Tomorrow, it shows up as conflicting automated actions.

Executable fragmentation stops being a reporting problem and becomes an action problem.

When organisations begin to grant AI agents the authority to act—not just report—the cost of fragmentation multiplies. One agent throttles production based on its definition of risk. Another agent increases output based on its definition of opportunity. Neither knows the other exists. Both are following their local logic correctly.

The result is not confusion. It is operational chaos.

If your organisation doesn't have a single place where decision logic is composed, versioned, and governed, you don't have agent readiness. You have agent potential.

This is the trajectory we are on. And the organisations that wait until automated actions conflict to impose structure will find that the structure they need cannot be retrofitted onto the chaos they have created.

The time to build the framework is before the agents start acting, not after.

What Leaders Should Do Differently Now

This moment requires a shift in how we think about enablement.

The wrong question: "Which AI tools should we allow?"

The right question: "What structure must exist before we unleash them?"

Here are concrete principles for leaders navigating this transition:

1. Don't standardise tools. Standardise primitives.

You will not win by controlling which AI tools people use. You will win by ensuring that whatever they build uses shared definitions, shared data models, and shared decision logic. The primitive is the unit of governance, not the tool.

2. Make reuse easier than reinvention.

If building from scratch is faster than finding and adapting an existing component, people will build from scratch. Always. Structure the environment so that composing is the path of least resistance.

3. Enforce parameterisation over hardcoding.

Every threshold, every assumption, every context-specific value should be an input, not a constant. This is not optional hygiene. It is the difference between solutions that scale and solutions that calcify.

4. Separate decision logic from execution.

The rules that determine what should happen must be visible, auditable, and changeable independently of the code that makes it happen. When logic is buried in execution, governance becomes archaeology.
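A hypothetical sketch of that separation, assuming Python 3.9+: rules live in a versioned registry with a named owner, and the execution layer only knows how to carry out an action. Tightening a threshold publishes version 2 of the rule without touching the executor, and every result carries the rule version that triggered it. `Rule`, `RuleRegistry` and the action names are invented for illustration; in practice the registry would be a governed service, not an in-memory dict.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Rule:
    name: str
    version: int
    condition: Callable[[dict], bool]  # decision logic: when to act
    action: str                        # name of the action to execute
    owner: str                         # who is accountable for this rule


class RuleRegistry:
    """A single, inspectable place where decision rules live."""

    def __init__(self) -> None:
        self._rules: dict[str, list[Rule]] = {}

    def publish(self, rule: Rule) -> None:
        history = self._rules.setdefault(rule.name, [])
        if history and rule.version <= history[-1].version:
            raise ValueError(f"{rule.name}: version must increase")
        history.append(rule)

    def current(self, name: str) -> Rule:
        return self._rules[name][-1]

    def history(self, name: str) -> list[int]:
        return [r.version for r in self._rules[name]]


# Execution layer: knows how to act, not when to act.
ACTIONS: dict[str, Callable[[dict], str]] = {
    "open_work_order": lambda ctx: f"work order opened for {ctx['asset']}",
}


def execute(registry: RuleRegistry, rule_name: str, ctx: dict) -> Optional[str]:
    """Apply the current version of a rule; return None if it does not fire."""
    rule = registry.current(rule_name)
    if not rule.condition(ctx):
        return None
    result = ACTIONS[rule.action](ctx)
    return f"{result} (rule {rule.name} v{rule.version}, owner {rule.owner})"


if __name__ == "__main__":
    registry = RuleRegistry()
    registry.publish(Rule("high_vibration", 1, lambda c: c["vibration_mm_s"] > 7.0,
                          "open_work_order", "reliability team"))
    registry.publish(Rule("high_vibration", 2, lambda c: c["vibration_mm_s"] > 6.0,
                          "open_work_order", "reliability team"))
    print(execute(registry, "high_vibration", {"asset": "FAN-3", "vibration_mm_s": 6.5}))
```

Because the rule, its version history and its owner can be inspected without reading the execution code, answering "why did this happen?" is a lookup rather than archaeology.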

5. Treat AI-built solutions as system components, not scripts.

If it affects production data, production processes, or production decisions, it is part of the system. It needs lifecycle management, version control, observability, and clear ownership. The fact that Claude built it in thirty seconds does not change this.

These are architectural choices, not governance slogans. They require investment, tooling, and cultural change. But they are the only path to capturing the value of code democratization without being destroyed by it.

The Uncomfortable Conclusion

The biggest enterprise risk of AI is not hallucination. It is not bias. It is not job displacement.

It is executable fragmentation at machine speed.

AI didn't make organisations faster. It made fragmentation cheaper.

Claude Cowork does not threaten organisations because it enables building.

It threatens organisations that lack the structural discipline to absorb what gets built.

Every team will move faster. Every team will solve their own problems. Every team will ship. And the enterprise will slowly drown in solutions that do not talk to each other, do not share definitions, do not compose, and cannot be governed.

The winners in this next phase will not be the organisations that automate the fastest.

They will be the ones who pair execution abundance with architectural restraint.

Because in the end, the future is not about who can build more.

It is about who can scale intelligence without scaling chaos.

The question for every leader is simple: When your people have the power to build anything, what ensures that what they build makes the organisation smarter, not just busier?

If you can't answer "where does our decision logic live?"—you already have the problem.

The tools won't wait for you to find the answer.

Sources & References

- Anthropic, Claude Cowork: Research Preview Announcement (January 2026)

- Anthropic Help Center, Getting Started with Cowork

- TechCrunch, Anthropic's new Cowork tool offers Claude Code without the code

- VentureBeat, Anthropic launches Cowork, a Claude Desktop agent that works in your files

- Simon Willison, First impressions of Claude Cowork, Anthropic's general agent

- Public interviews and coverage referencing Claude Code's use in rapid internal development